A guest post by Dr Michael Munnik, a Lecturer in the Centre for the Study of Islam at Cardiff University. On Twitter he is @michaelmunnik.

When I designed a new module on Religion and the News for Cardiff University’s department of religion and theology, I knew I would have a few hurdles to overcome. As religion students, those enrolled were unlikely to have learned any media theory already. Also, the dense theoretical concepts would be a step up for them. Finally, they would have to build a habit of reading the news.

This is a general problem: with social media hand-delivering news through spaces such as Facebook and Twitter, we’re less compelled to seek a range of news. Newspapers, magazines and broadcasters all need a web presence, so fewer of us have the habit of flipping through the pages and discovering what’s going on.

Things were different when I started my first degree, back in 1998. But by whatever means we got our news back then, I was under more acute pressure. I was studying for a journalism degree, so there were no excuses: I had to follow the news. Every day.

To ensure I and my classmates acquired this habit, we had a weekly news quiz in our J100 intro module. This was old school: our quiz was printed on sheets of paper (!) and we wrote our answers in. The quizzes were summative, too: they made up a small but essential portion of our mark.

I didn’t feel comfortable setting a news quiz for my non-journalism students that would bear marks, but I did want to encourage the practice of news consumption. A formative quiz, focused on news about religion, could still raise their awareness. It might also help them identify stories to write their summative assessments on.

If I could make the quiz low-stress and even enjoyable for the students, it was likely to compensate for the lack of ‘mandatory marks’ as a way of securing their interest and participation. I wanted something easy and engaging – so I turned to mobile devices.

And the news quizzes begin @jschool_cu with our new group of smart, talented aspiring journalists. pic.twitter.com/9CaNQIkBne

— Carleton J-School (@JSchool_CU) 6 September 2016

Even my alma mater is using tech to deliver the good ol’ news quiz.

Our college’s learning technologist threw some options at me, and after a bit of trial and error, I settled on PollEverywhere. Clickers were too limited – I wanted the flexibility to use short-answer questions. Twitter seemed diffuse, difficult to manage, and more public than I wanted. And Google Forms just didn’t have the user-ready interface. (Believe me, since doing this project and sharing it with others, I’ve learned of a lot more options.)

Though PollEverywhere is commercial software, a higher education account gets you 40 users for free. My module had a roll of 52, so I asked students to double up. I am a late-comer to smartphones anyway (I only started using one in May), and I worried about accessibility: was it fair for me to set a class activity that demanded students have laptops or smartphones? Putting students in pairs alleviated my fears that not everybody could take part, though this fear was pretty misplaced, as it turned out.

Other things that I liked: I had some control over the display of results. Not perfect, but more or less right. Importantly, students could answer blind with a timer going; once the timer stopped, I unlocked the answers, and a word-cloud or a graph would appear with their answers. There was a nice bit of theatre to this, and it allowed me to ‘teach through’ the right answers.

Over the week, I bookmarked links to news stories about religion. Friday afternoons, I would spend about an hour writing the questions. I aimed for nine to eleven each week, including sub-questions when merited. Monday morning in class, I would write the link on the blackboard (I did say I’m old-school). Students typed it in, and bang – the first question appeared.

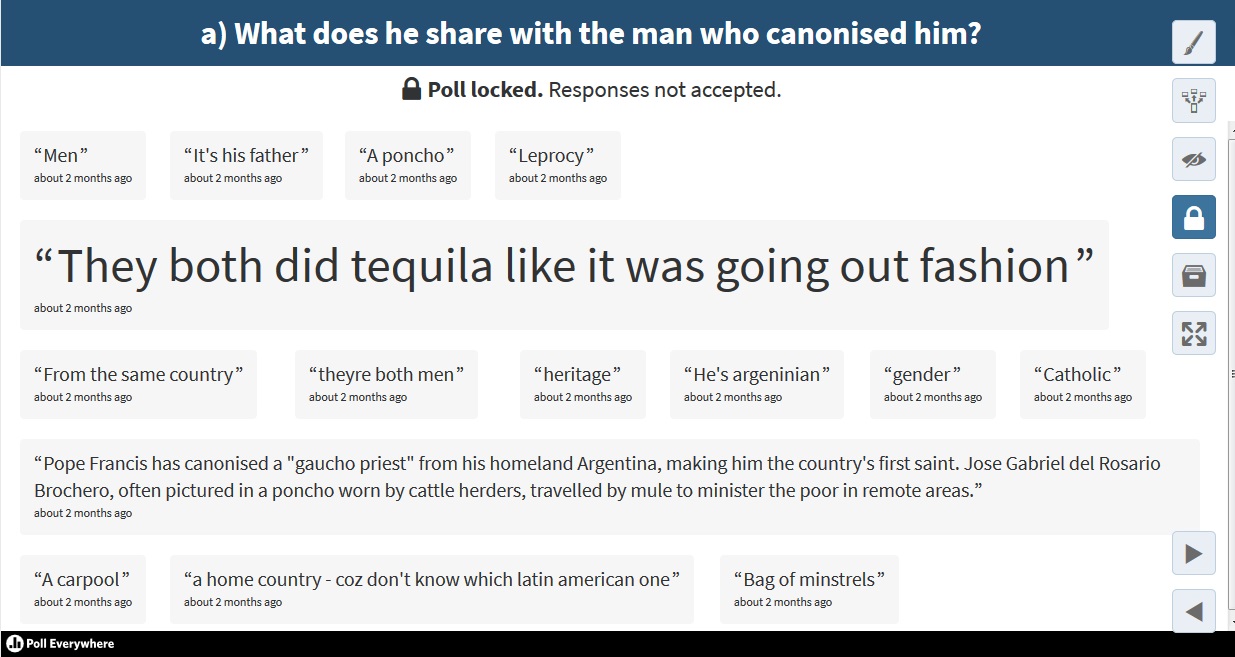

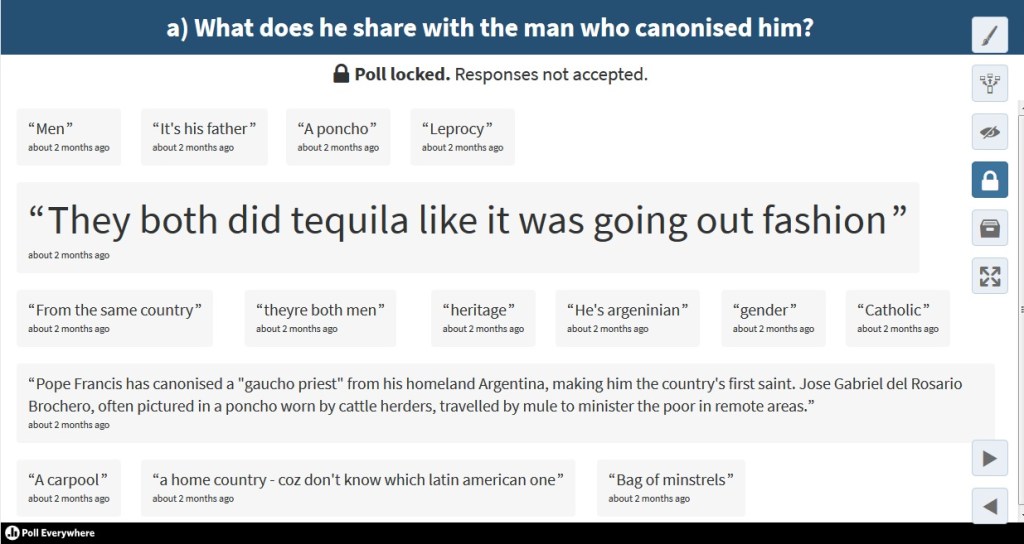

It seemed to go well. Students took part, trying for a right answer or gamely confessing they didn’t know. Sometimes they took the opportunity to be clever, and I didn’t mind this, although I can see the potential for it to get out of control. In one instance, I had asked ‘Who was the Gaucho Priest?’ (An Argentinian priest who had recently been canonised by Pope Francis.) Somehow, I got distracted, and the timer hadn’t stopped, so the question hadn’t closed. That meant students had ages to find out the right answer. Really, I couldn’t prevent them from googling an answer given that I wanted them to provide that answer on a personal device equipped for googling. Besides insisting on the honour system or pub-quiz rules, the timer was my only defence. They were feeling a bit sparky, then, when the sub-question came: ‘What does he share with the man who canonised him?’ You can see the clever replies, including one who copied and pasted the first two sentences of the story from bbc.co.uk.

PollEverywhere does provide an opportunity to screen answers before publishing and reject inappropriate ones, but you have to subscribe for this function.

Looking back at the results from the module, multiple-choice questions had a higher response rate than short-answer ones. I also noted that the first time I ran the quiz, I had an average of 20.1 responses per question, with a peak of 29. By the last week of the quiz, the average number of responses was 6.5. One question had no responses at all. Perhaps students were bored with it; perhaps they were distracted by Christmas break and the impending exams. Either way, participation tailed off.

Half-way through the semester, though, I took their temperature on the quiz. I added some evaluation questions using PollEverywhere itself. Responses were actually higher than for the quiz questions themselves (no timer), and they were almost uniformly positive. They liked the innovation in teaching and the chance to use mobile tech, and they gently suggested that access to devices really is not an issue.

They were less clear on the pedagogical utility of the quizzes. A quarter disagreed that the quizzes fit well with the rest of the module, and one third said they didn’t see a connection between the quizzes and the summative assessments in the module. That’s too bad and means more explaining on my part this year.

I certainly saw the connection, though. Many of the news articles that students chose to write their summative critical commentaries on were stories that had appeared in the news quiz. As an introduction to the kinds of stories they should write on, the quiz worked. I got a further steer from their year-end evaluations, as six students referred to the quiz unprompted in their free-text comments, all positively. So, I am tweaking and changing and doing more explaining, but I’m running the quiz again this year.

Not only that, I shared my pilot project with colleagues across the university when Cardiff held a conference on teaching innovation this July. Since then, I’ve had invitations to do smaller seminars for inter-department CPD, and colleagues have gotten in touch with me, either to find out how to use it or let me know what they use. A group of us are getting together to see if we can co-ordinate software usage across the university to improve what we offer our students.

Dr Michael Munnik is Lecturer in Social Science Theories and Methods at Cardiff University, working with the Centre for the Study of Islam in the UK and the Department of Religion and Theology. His research examines the engagement of Muslims with the production of news in Britain. Before postgraduate study, he worked as a journalist in public radio in Canada. http://www.cardiff.ac.uk/people/view/153675-munnik-michael
